Information Structures for Privacy and Fairness

Description

The increasingly pervasive use of big data and machine learning raises various ethical issues, in particular regarding privacy and fairness.

In this talk, I will discuss some frameworks for understanding and mitigating these issues, focusing on iterative methods from information theory and statistics.

In the area of privacy protection, differential privacy (DP) and its variants are the most successful approaches to date. One of the fundamental issues of DP is how to reconcile the loss of information that it implies with the need to preserve the utility of the data. In this regard, a useful tool for recovering utility is the Iterative Bayesian Update (IBU), an instance of the well-known Expectation-Maximization method from statistics. I will show that the IBU, combined with the metric version of DP, outperforms the state of the art, which is based on algebraic methods combined with the Randomized Response mechanism and is widely adopted by the Big Tech industry (Google, Apple, Amazon, …). Furthermore, I will discuss a surprising duality between the IBU and one of the methods used to enhance metric DP, namely the Blahut-Arimoto algorithm from rate-distortion theory. Finally, I will discuss the issue of biased decisions in machine learning and show that the IBU can also be applied in this domain to ensure fairer treatment of disadvantaged groups.
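To make the IBU step concrete, here is a minimal sketch in plain Python; it is not the speaker's implementation. It assumes a known obfuscation channel given as a row-stochastic matrix `channel[x][y] = P(report y | true value x)` and an empirical distribution `observed` over the noisy reports. The function names `krr_channel` and `ibu`, the uniform starting point, and the fixed iteration count are illustrative choices. The k-ary randomized response channel is used only because it is short to write; any row-stochastic matrix, including one from a metric DP mechanism, could be passed instead.

```python
import math


def krr_channel(k, eps):
    """k-ary randomized response as a k x k row-stochastic matrix (illustrative helper)."""
    denom = math.exp(eps) + k - 1
    keep = math.exp(eps) / denom   # probability of reporting the true value
    flip = 1.0 / denom             # probability of reporting any one other value
    return [[keep if x == y else flip for y in range(k)] for x in range(k)]


def ibu(channel, observed, iterations=500):
    """Iterative Bayesian Update: EM estimate of the distribution on true values.

    channel[x][y] is P(report y | true x); observed[y] is the empirical
    frequency of report y. Starts from the uniform distribution (an assumption).
    """
    n, m = len(channel), len(channel[0])
    estimate = [1.0 / n] * n
    for _ in range(iterations):
        # Probability of each report under the current estimate.
        marginal = [sum(estimate[x] * channel[x][y] for x in range(n)) for y in range(m)]
        # Average the Bayesian posterior P(x | y) over the observed reports.
        estimate = [
            sum(
                observed[y] * estimate[x] * channel[x][y] / marginal[y]
                for y in range(m)
                if marginal[y] > 0
            )
            for x in range(n)
        ]
    return estimate


# Usage: obfuscate a known distribution exactly, then try to recover it.
true_dist = [0.7, 0.2, 0.1]
C = krr_channel(3, 1.0)
noisy = [sum(true_dist[x] * C[x][y] for x in range(3)) for y in range(3)]
print(ibu(C, noisy))
```

In this idealized usage the noisy distribution is computed exactly rather than sampled, so the estimate should approach `true_dist`; with finite samples the IBU converges instead to a maximum-likelihood estimate for the observed frequencies.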

Sur le même thème

-

Comment les machines apprennent ?

FerréArnaudANF TDM et IA 2025 | Comment les machines apprennent ? avec Arnaud Ferré (MaIAGE, INRAE)

-

Comment une IA peut-elle faire la conversation ?

FerréArnaudANF TDM et IA 2025 | Comment une IA peut-elle faire la conversation ? avec Arnaud Ferré (MaIAGE, INRAE)

-

Interagir efficacement avec un agent conversationnel

ChifuAdrian-GabrielANF TDM et IA 2025 | Comment interagir efficacement avec un agent conversationnel : prompt engineering et prompt hacking avec Adrian Chifu (LIS, Aix-Marseille Université)

-

Les modèles de langue : usages et enjeux sociétaux

GuigueVincentANF TDM et IA 2025 | Les modèles de langue : usages et enjeux sociétaux avec Vincent Guigue (MIA, AgroParisTech)

-

Déploiement d'IA sécurisées en local appliqué aux données sensibles et open source

SanjuanEricANF TDM et IA 2025 | Retour d’expérience sur le déploiement d'IA sécurisées en local (processus ETLs) appliqué aux données sensibles (santé) et open source (OpenAlex) avec Éric SanJuan (LIA, Avignon

-

Interroger ses documents avec des grands modèles de langage : méthode RAG

PoulainPierreANF TDM et IA 2025 | Interroger ses documents avec des grands modèles de langage : découverte de la méthode RAG avec Pierre Poulain (Laboratoire de Biochimie Théorique, Université Paris Cité)

-

ML-enhancement of simulation and optimization in electromagnetism

PestourieRaphaëlFull-wave simulations of large-scale electromagnetic devices — spanning thousands of wavelengths while featuring sub-wavelength geometrical details — pose significant computational challenges….

-

Des opérateurs de neurones aux modèles de fondation : Scientific Machine Learning pour les phénomèn…

LehmannFannyLe Scientific Machine Learning a émergé comme un domaine à la frontière entre apprentissage automatique et simulation numérique pour construire des méta-modèles innovants...

-

Impact des techniques neuronales pour la microscopie computationnelle

DorizziBernadetteGottesmanYaneckCet exposé s'attachera à décrire l'intérêt des techniques de réseaux de neurones pour la microscopie computationnelle…

-

Quel est le prix à payer pour la sécurité de nos données ?

MinaudBriceÀ l'ère du tout connecté, la question de la sécurité de nos données personnelles est devenue primordiale. Comment faire pour garder le contrôle de nos données ? Comment déjouer les pièges de plus en

-

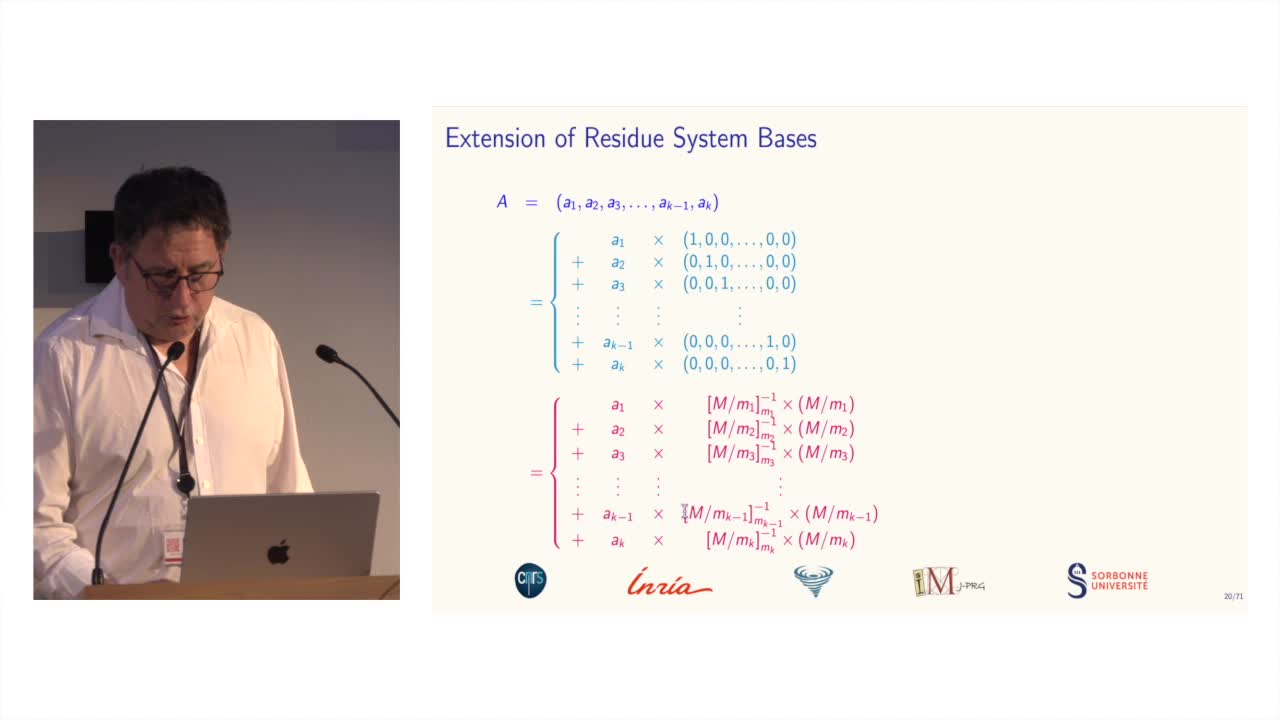

Des systèmes de numération pour le calcul modulaire

BajardJean-ClaudeLe calcul modulaire est utilisé dans de nombreuses applications des mathématiques...

-

Soirée de présentation de l'ouvrage "Le parfait fasciste"

De GraziaVictoriaLebrunJeanPrésentation de l'ouvrage "Le parfait fasciste", de Victoria de Grazia, présenté par Jean Lebrun