Notice

Action recognition from video: some recent results

- document 1 document 2 document 3

- niveau 1 niveau 2 niveau 3

Descriptif

While recognition in still images has received a lot of attention over the past years, recognition in videos is just emerging. In this talk I will present some recent results.

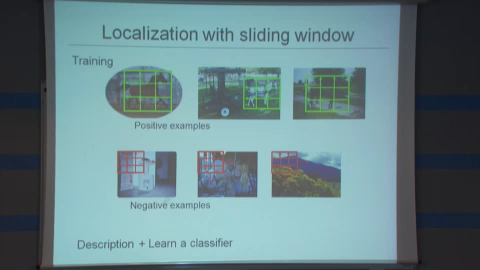

Bags of features have demonstrated good performance for action recognition in videos. We briefly review the underlying principles and introduce trajectory-based video features, which have shown to outperform the state of the art. These features are obtained by dense point sampling in each frame and tracking them based on displacement information from a dense optical flow field. Trajectory descriptors are obtained from motion boundary histograms, which are robust to camera motion.

We then show how to integrate temporal structure into a bag-of-features model based on so-called actom sequences. We localize actions based on sequences of atomic actions, i.e., represent the temporal structure by sequences of histograms of actom-anchored visual features. This representation is flexible, sparse and discriminative. The resulting model is shown to significantly improve performance over existing methods for temporal action localization. Finally, we show how to move towards more structured representations by explicitly modeling human-object interactions. We learn how to represent human actions as interactions between persons and objects. We localize in space and track over time both the object and the person, and represent an action as the trajectory of the object with respect to the person position, i.e., our human-object interaction features capture the relative trajectory of the object with respect to the human. This is shown to improve over existing methods for action localization.

Thème

Documentation

Liens

Colloquium Jacques Morgenstern

Le but du colloquium est d’offrir une vision d’ensemble des recherches les plus actives et les plus prometteuses dans le domaine des Sciences et Technologies de l’Information et de la Communication (STIC). Nouveaux thèmes scientifiques

Avec les mêmes intervenants et intervenantes

-

Interprétation de contenus d'images (Visual object recognition) : 1ère partie

SCHMID Cordelia

Dans la première partie de ce cours nous introduisons des descripteurs d'image robustes et invariants ainsi que leur application à la recherche d’images similaires. Nous expliquons ensuite comment

-

Interprétation de contenus d'images (Visual object recognition) : 2eme partie

SCHMID Cordelia

Dans la première partie de ce cours nous introduisons des descripteurs d'image robustes et invariants ainsi que leur application à la recherche d’images similaires. Nous expliquons ensuite comment

Sur le même thème

-

Le projet Affinity - Evaluer le comportement des personnes avec TSA en présence de leur affinité

CHéREL Myriam

BAYOU-OUTTAS Meriem

FOURNIER Julie

BUCHER Emma

MANN Pauline

A travers cette série d'interviews, le LabEx vous invite à découvrir le projet Affinity qui s'intéresse à la relation entre les personnes atteintes d'un trouble du spectre de l'autisme et leurs

-

Enseignement et Innovation #18 – Viser l’évaluation pour apprendre : retour d’expériences sur l’évo…

SEBASTIEN Didier

KOLINSKY Corinne

Enseignement et Innovation #18 – Viser l’évaluation pour apprendre : retour d’expériences sur l’évolution des pratiques d’une enseignante

-

En route vers une digitalisation des Universités

PORLIER Christophe

Les causeries de la pédagogie - Enseigner en ligne 1

-

La classe moderne : retour d'expérience

NAGELS Adline

SEBASTIEN Didier

Causeries de la pédagogie, Enseignement et innovation 9

-

La classe moderne

SEBASTIEN Didier

NAGELS Adline

Causeries de la pédagogie, Enseignement et innovation 8

-

Sketchnoting : mode d'emploi

Réviser ou faire une synthèse n'est pas toujours aisé pour les apprenants... et s'il existait une méthode pour faire baisser le coût à l'engagement de ce type de tâche ?

-

Environnements virtuels et immersifs: retours d’expériences avec les étudiants du Lab Vivant Odyssée

VILLIOT-LECLERCQ Emmanuelle

Présentation orale de travaux de recherche par Emmanuelle VILLIOT-LECLERCQ (Grenoble École de Management) lors de Screen Day 2022.

-

Jouets futiles et sérieux de la Renaissance

Si les jeux à la Renaissance ont fait l’objet d’un beau colloque du Centre de la Renaissance en 1982, sous la direction de Philippe Ariès et André Stegman, et si les jeux de hasard, massivement

-

La socialisation de genre par les jouets

De l’amplification de la segmentation de genre dans le marketing du jouet aux luttes féministes connectées et stratégies des parents La segmentation de genre dans le marketing du jouet s’est

-

Co-enseignement et difficulté scolaire dans le premier degré : penser le lien d'un point de vue did…

Les organisations pédagogiques qui permettent à plusieurs enseignants d’intervenir sur un même groupe classe sont largement valorisées par les équipes dans le traitement de la difficulté scolaire et

-

La forme de la phrase en français de tous les jours

LARRIVéE Pierre

La variation de registre langagier a un impact sur la forme même de l’élément central du langage qu’est la phrase. Dans cette vignette, je discute deux caractéristiques de la phrase du français de

-

Unique en son genre

La mixité est officialisée depuis 1975 dans le système scolaire français. Pourtant, le succès des filles à l'école dans toutes les disciplines n'a pas remis en cause leur absence dans de nombreuses